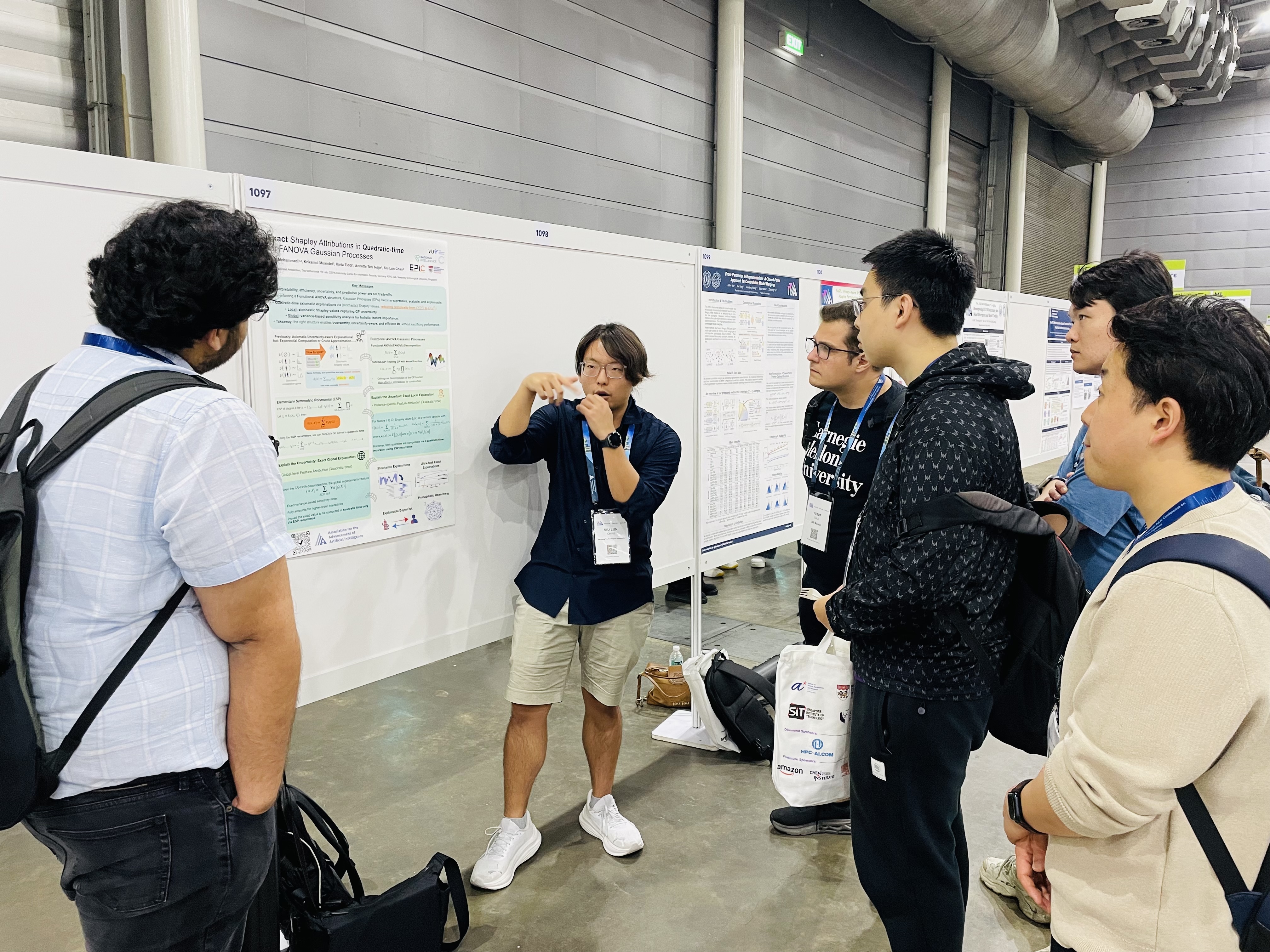

Epistemic Intelligence & Computation Lab

College of Computing and Data Science, Nanyang Technological University, Singapore

The Epistemic Intelligence & Computation (EPIC) Lab is dedicated to advancing the principles necessary for developing epistemically intelligent systems, i.e., machine learning systems that can recognise, reason about, and communicate the limits of their knowledge. To achieve this, our research spans from foundational theory to translational applications, focusing on knowledge-level uncertainty, commonly referred to as epistemic uncertainty.

- Mathematical Foundations of Epistemic Uncertainty: We investigate the mathematical and statistical structures underlying knowledge-level uncertainty. Our work examines formal representation frameworks (e.g., single probability measures, sets of distributions, and higher-order models), principled quantification strategies (e.g., information-theoretic and discrepancy-based characterisations), methods for comparison and geometry (e.g., probability metrics and statistical distances), and procedures for empirical verification (e.g., statistical testing and model criticism). This research draws on tools from probability theory, imprecise probability, Bayesian statistics, information theory, statistical inference and testing, and cooperative game theory.

- Algorithms for Epistemic Uncertainty-Aware Learning: We design and analyse machine learning algorithms that explicitly model, propagate, and exploit epistemic uncertainty across the learning pipeline. Our work focuses on uncertainty-aware predictive modelling (e.g., Gaussian processes, Bayesian neural networks, credal predictors, and conformal prediction methods), structured inference under incomplete or ambiguous information (e.g., causal, counterfactual, and strategic learning), and decision-making under uncertainty (e.g., active learning and Bayesian optimisation). Emphasis is placed on statistical validity, robustness to distributional shift, computational efficiency, and principled uncertainty communication, enabling learning systems to support reliable reasoning and decision processes in open-world settings.

- Epistemic Uncertainty-Aware AI Systems, Frameworks, and Applications: We operationalise our theoretical and algorithmic advances by developing uncertainty-aware AI systems and methodological frameworks for real-world deployment. Our work investigates how epistemic uncertainty can be leveraged to improve reliability, safety, and robustness in complex learning environments, with applications spanning interpretable and explainable models, out-of-distribution detection, robustness under distributional shift, incentive-aware and adversarial learning, AI safety, and foundation / language models. A central objective is the design of systems that can recognise the limits of their knowledge, adapt to novel conditions, and communicate uncertainty in a manner conducive to human oversight and decision-making.

Why are we doing this? Modern machine learning systems have achieved remarkable success in predictive and generative tasks and demonstrate powerful abilities to model statistical variability and patterns. We believe, however, that their capacity to reason about uncertainty beyond observed data regularities remains fundamentally limited. In particular, forms of uncertainty arising from ignorance, ambiguity, and broader unknown unknowns are not adequately captured by purely pattern-driven approaches. As reliance on such systems continues to grow, these limitations may lead to deeper structural challenges for reliability, robustness, and safety.

This gap motivates our research, which seeks to treat uncertainty as a first-class object of study rather than a secondary by-product of prediction. Our goal is to develop rigorous theory and algorithms to enable learning systems to represent, reason about, and communicate the limits of their knowledge — making uncertainty more than merely an afterthought or a set of error bars.

If any of this resonates with you, feel free to reach out for collaboration. We are always excited to hear from you.

News

- EPIC Lab is co-organising the 2nd Epistemic Intelligence in Machine Learning Workshop at ICML 2026. Check out the website for further information.

- Yusuf Sale (LMU Munich, Germany) visited our group and had an inspiring research discussion with us!

- Prof. Fabio Cuzzolin (Oxford Brookes University, UK) visited our group and delivered a research seminar at NTU!